BRF Project: India's Kaveri Engine Saga (No Discussions)

This thread will serve as a repository of technical knowledge on Indian efforts to build aero engines, APUs, jet starters and other engine accessories. It will serve as a reference for the future.

Posts and counter-posts should be based on published data and state-of-the-art technical knowledge. All other posts, including personal opinions, mere links and quotes, will be deleted on sight.

This thread will be highly moderated. The moderators' judgement on whether a post will be retained or not will be final. Users posting unworthy posts repeatedly will attract warnings.

Last edited by Rahul M on 11 Feb 2014 16:25, edited 3 times in total.

Re: The Kaveri Saga - India's attempt to build a modern Turb

Kaveri is an ab initio afterburning turbofan development programme of GTRE, intended to be the powerplant for the LCA.

[Genesis]

The genesis of the Kaveri is quite aptly captured in the CAG Report No. 16 of 2010-11 (Air Force and Navy):

As a follow-up to the August 1983 sanction of the development of a multi-role Light Combat Aircraft (LCA), the need of an indigenous turbofan to power it was felt. The ASR for LCA specified broad (and sometimes subjective) dimensional and performance requirements of its powerplant.

...

Accordingly, there was a corresponding demand for a suitable engine for powering the LCA. Feasibility studies carried out in India and abroad revealed that there was no suitable engine available anywhere in the world, though Rolls Royce RB-1989 stage D and GE F404-F2J engines, by and large, met the requirement, provided certain concessions were granted in the Air Staff Requirements (ASR).

...

At this point of time, the Gas Turbine Research Laboratory was already working upon an aero-engine project, the GTX 37 engine, since 1982. In August 1986, a feasibility study was carried out jointly by Aeronautical Development Agency (ADA), Hindustan Aeronautics Limited (HAL) and Gas Turbine Research Establishment (GTRE) for evaluating the GTX-37 engine. The feasibility study indicated that the GTX-37 engine would, after certain rescheduling, meet the requirements of the LCA. GTRE accordingly, in December 1986, submitted a project proposal for the development of the Kaveri engine.

GTRE further proposed that it would be desirable to prove the newly designed airframe of the LCA with a proven engine first. Subsequently, the prototypes would be flown with the GTX-35 engine, as soon as this engine was type certified and cleared for the flight.

Based on the above proposal, Government sanctioned a project in March 1989 at a cost of Rs 382.81 crore with the probable date of completion (PDC) as December 1996, for the design and development of Kaveri engine.

Reams and reams have been written on the current state of Kaveri, and there's no point in trying to reproduce it in detail. However, the following conclusion by the same CAG report quoted above sums it up quite appropriately:

The Kaveri Engine Project was sanctioned with the following basic objectives:

1) Designing and developing the GTX-35 engine to meet the specific needs of the LCA.

2) To create a full fledged indigenous base to design and develop any advanced technology engine for future military aviation programmes.

3) The engine so developed was to establish its performance integrity in various categories of tests prescribed by the aero-engine industry world over.

Despite almost two decades of development effort with an expenditure of Rs 1,892 crore, GTRE is yet to fully develop an aeroengine which meets the specific needs of the LCA. The successful culmination of the project to develop an aero-engine through indigenous efforts is now dependent upon a Joint Venture with a foreign vendor.

Last edited by maitya on 18 Jan 2014 21:36, edited 4 times in total.

Re: The Kaveri Saga - India's attempt to build a modern Turb

Guys, I think we'll go nowhere if we try to debate whether Kaveri/Kabini is state-of-the-art or not, good or bad, or built from scratch or not.

As with most things in life, all of these attributes are relative, and it is thus pointless to label them as absolute and argue on that basis.

So, here’s my take (in 3 parts) on how this ab initio military turbojet/fan Engine development programme should be viewed/evaluated – apologies, it became a bit too long-winded (I have broken it up into 3 parts, for page-navigation ease).

Anyway here goes …

[Part 1]

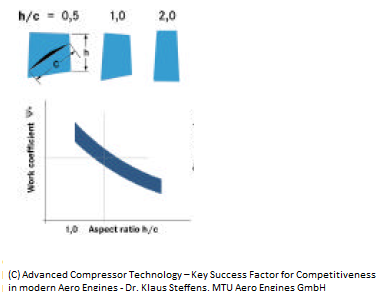

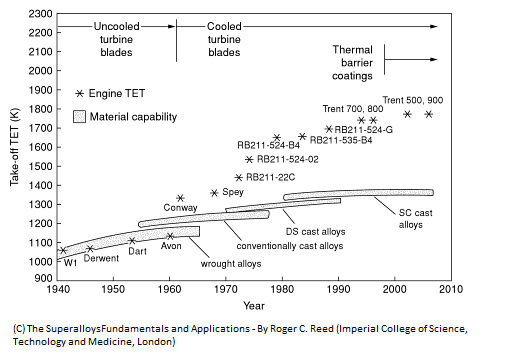

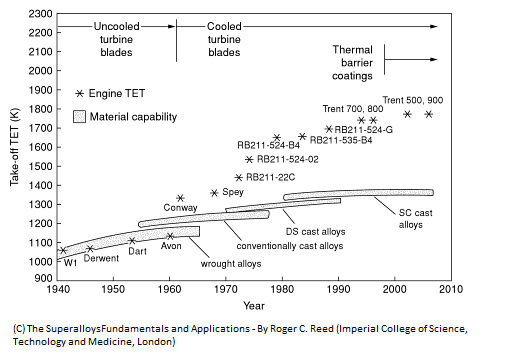

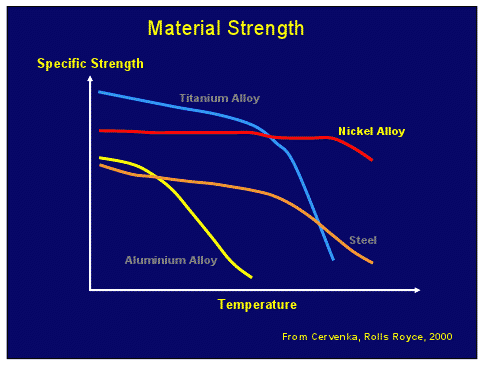

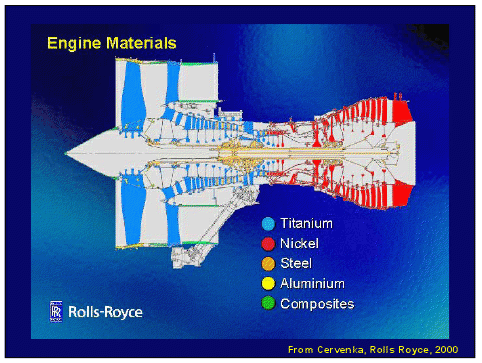

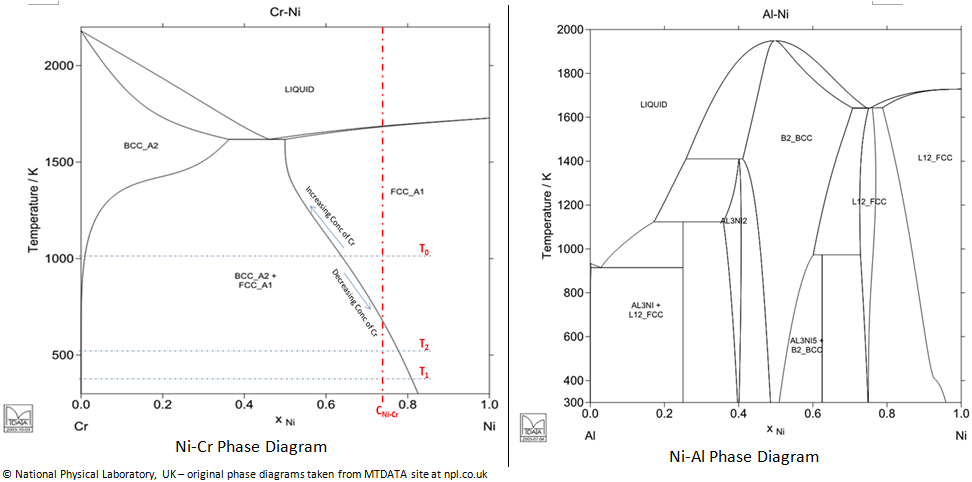

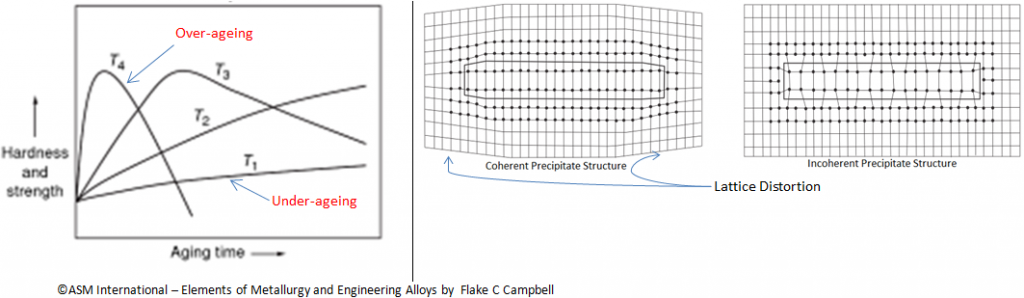

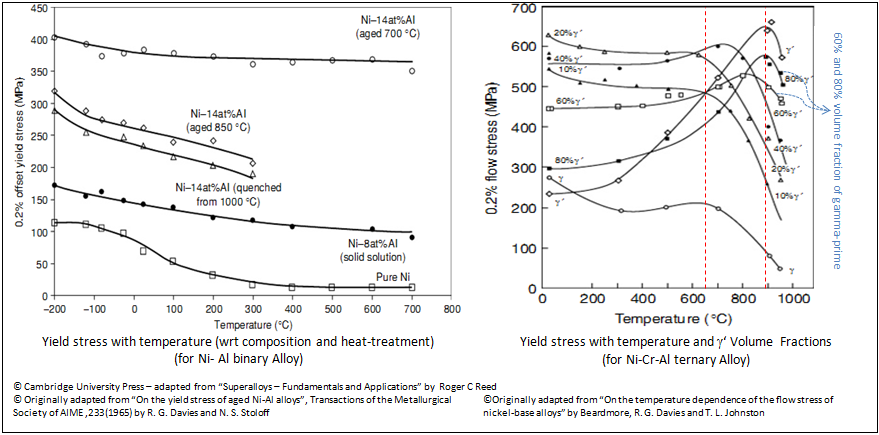

Kaveri was technology-wise contemporary till the late 90s - ok, maybe even the early 2000s. Directionally solidified cast blades, the flat-rating concept, a TET near 1400 deg C, an OPR of 21-22 etc. are all hallmarks of late-1990s military engines that were either being unveiled or were in mass use then.

So should we label it a contemporary engine, carte blanche? Hell no!!

Contemporary (1990s) Engine R&D and its impact on Kaveri: What happened is that, while we were busy developing Kabini/Kaveri, the established engine developers were all deep into R&D and prototyping of next-gen technologies, broadly in the following areas:

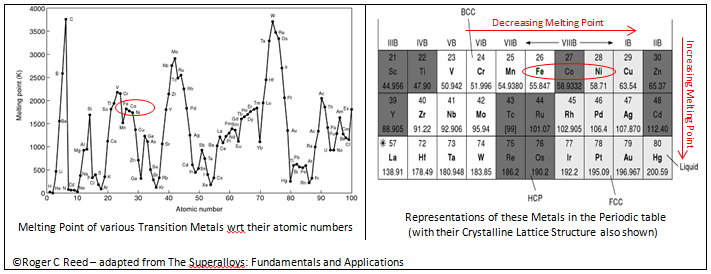

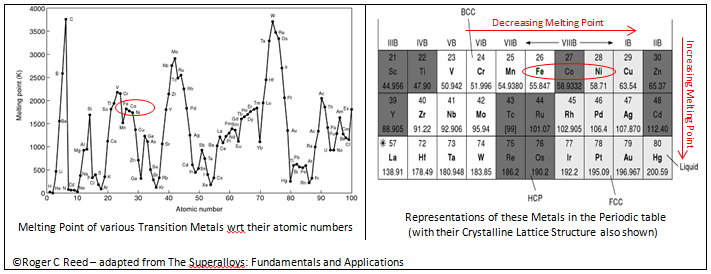

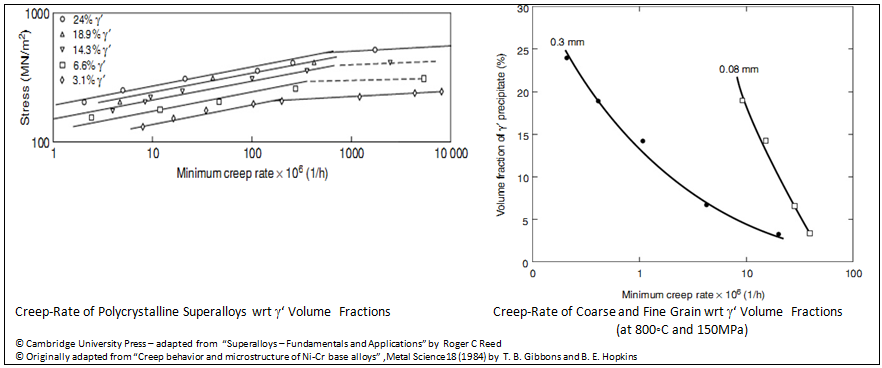

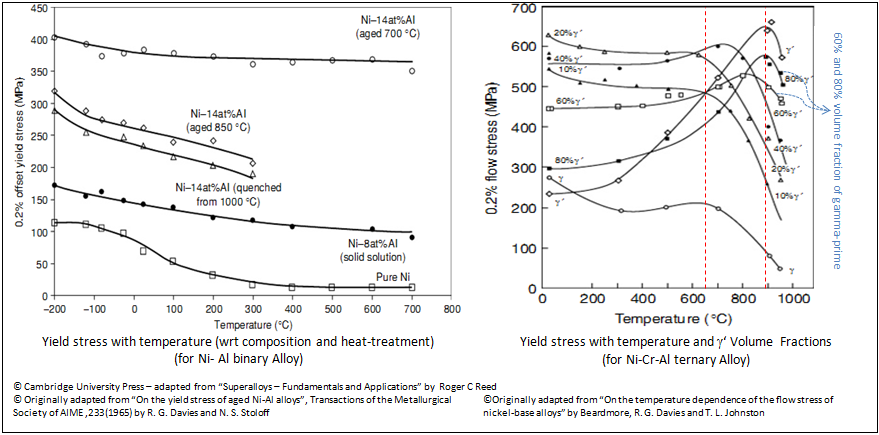

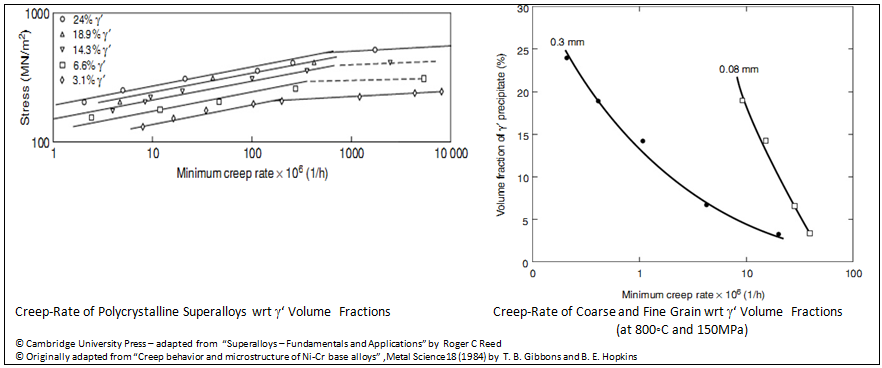

1) Turbine Blade material technology - A TET increase is one of the most important factors impacting the efficiency levels of a turbojet/turbofan. The challenge here is material and casting technology able to withstand an additional 300 deg C in the operating environment. Single-crystal blades (SCBs) partly answer that problem, but thermal barrier coatings (TBCs), higher-temperature oxidation resistance and multi-flow air paths within the blades are areas where huge progress was made.

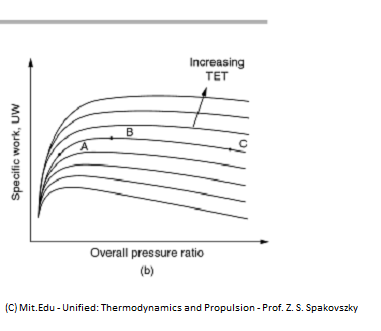

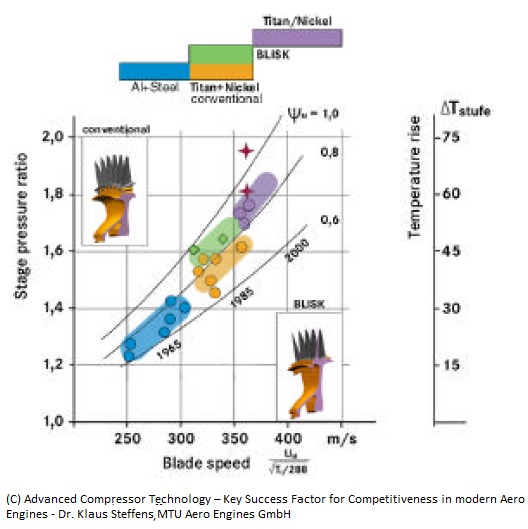

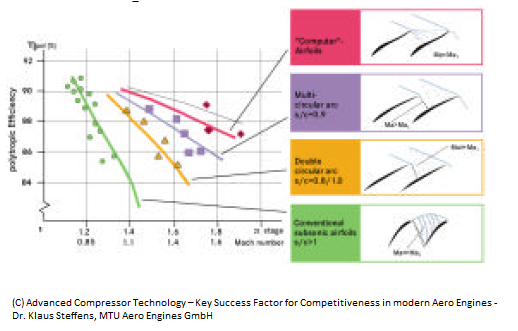

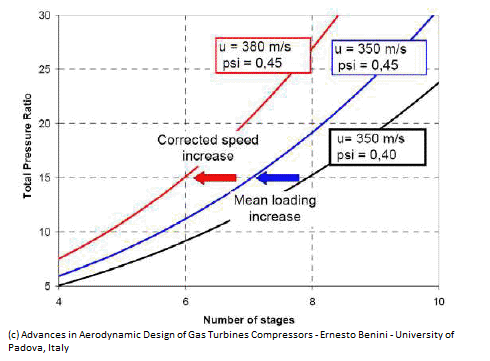

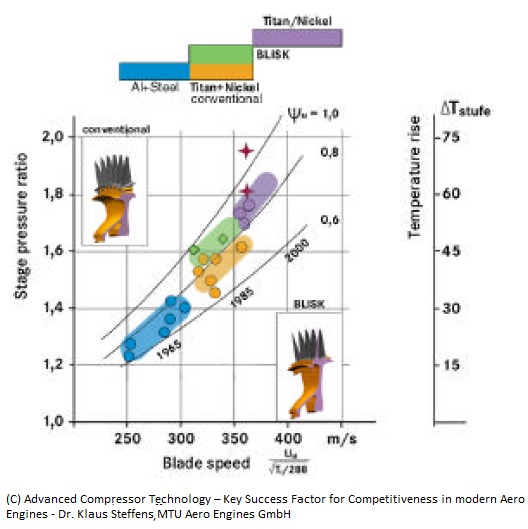

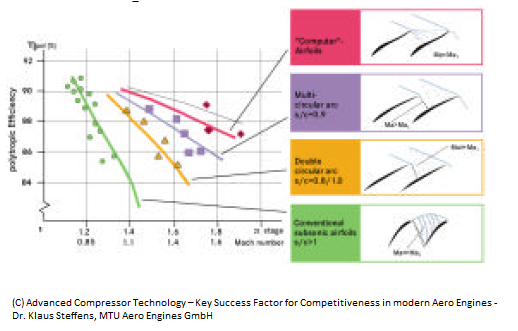

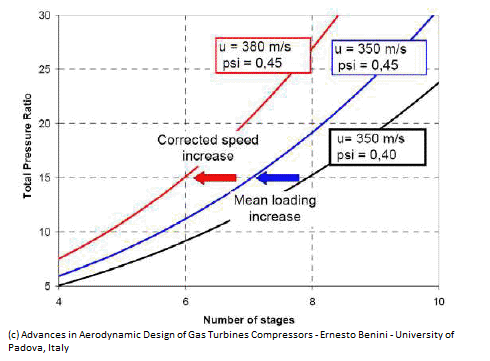

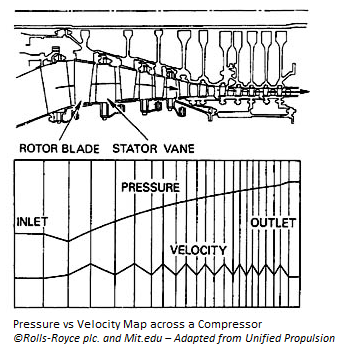

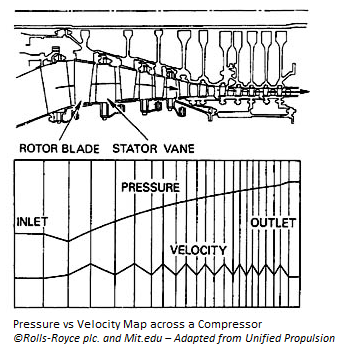

2) Compressor Design - Some sort of re-birth of this dormant R&D area happened in the 1990s, with a surge in R&D (a back-to-basics phenomenon?) resulting in the huge (some say game-changing) advances seen in the 2000s. Compressor design gains (and, equally important, the industrial manufacturing capability to translate those gains into actual manufactured products) have a direct bearing on pressure ratios, the other most critical parameter in a turbojet/turbofan.

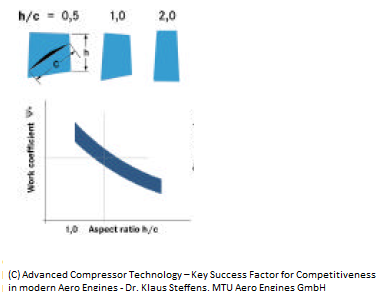

The advent of supersonic compressor blade speeds (1.6M, while Kaveri is stuck at a transonic level of 1.2M etc.), multi-circular-arc blade design (Kaveri, I think, is at best a double-circular-arc design), low-aspect-ratio blade design and, more importantly, the advances in manufacturing engineering technology R&D (and subsequent manufacturing engineering capability) needed to translate these designs into high-strength and relatively high-temp compressor blades and disks ensured the achievement of 2000s-contemporary PR levels of 28-30.
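To get a rough feel for why moving from a PR of about 21-22 to 28-30 matters, here is a minimal sketch using the textbook ideal Brayton cycle relation, efficiency = 1 - PR^(-(gamma-1)/gamma). This is only an idealisation: it ignores all component losses and assumes a constant gamma of 1.4 (an assumption for air, not a Kaveri figure), and the function name is made up for illustration. Treat the numbers as directional, not as engine data.

```python
def ideal_brayton_efficiency(pressure_ratio, gamma=1.4):
    """Thermal efficiency of an ideal (lossless) Brayton cycle.

    gamma is the ratio of specific heats; 1.4 is an assumed value for air.
    """
    if pressure_ratio <= 1.0:
        raise ValueError("pressure ratio must be greater than 1")
    return 1.0 - pressure_ratio ** (-(gamma - 1.0) / gamma)


if __name__ == "__main__":
    # 21.5 is the overall pressure ratio quoted for Kaveri in this thread;
    # 28 and 30 bracket the "2000s contemporary" range mentioned above.
    for pr in (21.5, 28.0, 30.0):
        eff = ideal_brayton_efficiency(pr)
        print(f"PR {pr:4.1f}: ideal cycle efficiency {eff:.3f}")
```

Even in this idealised picture the gain from the higher pressure ratio is a few percentage points of cycle efficiency, which is why compressor technology has such a direct bearing on SFC.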

So yes, the technology levels that we see in Kaveri/Kabini are what were already available in the military engines of the 1980s and 1990s - i.e. these were in R&D and developed in the 1970s and 1980s. We started the R&D and development in the 1990s, and two decades later (slightly longer than what the western engine design houses took in the 1960s-70s, maybe) we have close to a working engine.

But the engines available today (F414, for example) have already incorporated the technologies that were developed in the 1990s and 2000s.

One pertinent question comes up, though (partially brought out by Pentaih-ji):

All of the above reasoning is fine and maybe even acceptable - but couldn't we have shortened this two-decades-plus development and engineering period and somehow played catch-up?

The problem that I see in the previous set of posts is the argument that, without any experience in turbojet and turbofan engine design, this would have been impossible to achieve. Well, experience is a huge factor but not the only factor – one of the major reasons for where we are today, IMVHO and daresay, is the set of design choices (both core engine design and material/manufacturing choices) made back in the 1980s.

But to understand this aspect we need to go back a bit and examine the history of Kaveri engine design/development.

[contd ...]

Re: The Kaveri Saga - India's attempt to build a modern Turb

[Part 2]

Kaveri History: Well, first of all, it’s erroneous to assume Kaveri (or GTX-35 VS) is the absolute first turbojet/turbofan to be designed/developed from the ground up (from scratch) by GTRE – it’s not. In fact Kaveri is not a “from scratch” development in the first place – it is more of an upgrade.

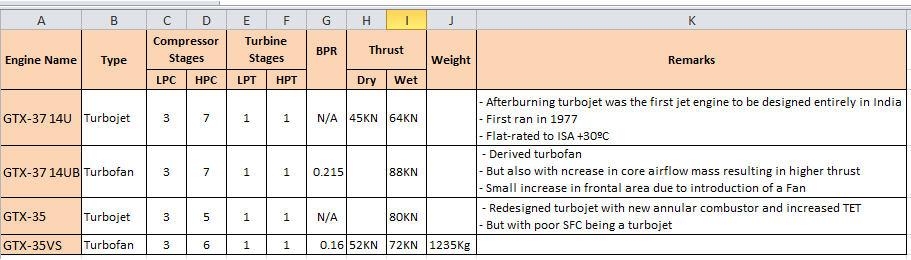

Its predecessors were GTX-37 U (turbojet) -> GTX-37 UB (its turbofan version) -> GTX-35 (enhanced turbojet based on 37U tech). And Kaveri (or GTX-35 VS) is more of an upgrade of GTX-35 (same core etc.). The following schematic depicts the Kaveri lineage:

Note how the reduction of HPC stages was carried out to reduce weight, while turbine efficiency was increased by raising TET (and OPR) simultaneously – all of these required advances in materials tech as well. Also note the mass-flow drop when graduating from a turbojet to a turbofan, necessitating further efficiencies in turbine and compressor technologies (or an increase in the number of corresponding stages).

Pls note the 37 series is from the 70s and early 80s, while the 35 series is from the late 80s to early-mid 90s.

But all of this still doesn’t make the above “lack-of-experience” argument completely void, as none of these predecessors actually flew; they are more laboratory products (or tech demos).

Back in 1983, right after the LCA project was sanctioned, a concurrent engine evaluation study was conducted by GTRE - in 2 years time, in 1985, this was completed and the summary finding was "No contemporary engine is available world-wide that meets the LCA engine specifications".

The F-404 etc. were an afterthought and, more importantly, a risk-mitigation step, which, due to non-delivery of the actual engine, has now become the default engine.

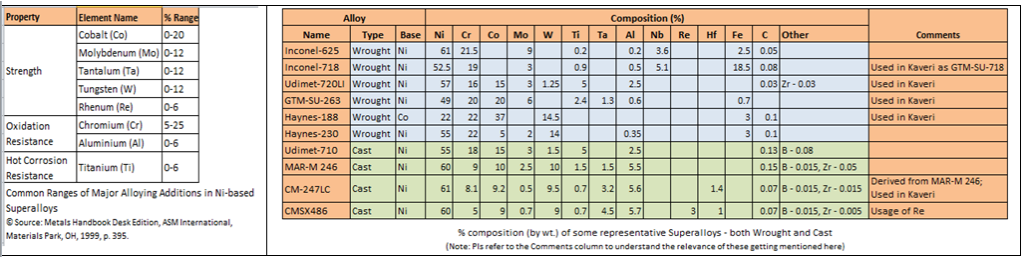

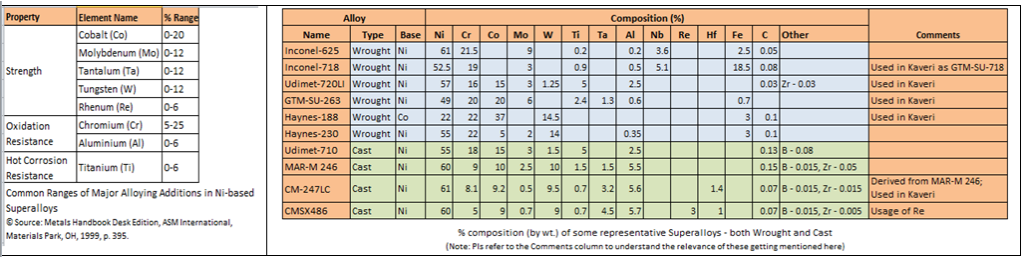

Anyway, similarly a “Materials Committee” of GTRE in 1989, after a comprehensive study of various materials of contemporary turbo-jet/fan engines, and also after taking into account the infrastructure facilities available within the country in general and production capability of MIDHANI and DMRL, recommended the development of material, batch production and type certification process etc.

The Kaveri development programme was then launched in 1989.

The Kaveri itself (actually only the core, Kabini) first ran in Mar 1995, and 2 Kabini prototypes (C1 and C2) and 3 full engine prototypes (K1, K2, K3) ran between 1995 and 1998.

(contd ...)

Re: The Kaveri Saga - India's attempt to build a modern Turb

[Part 3]

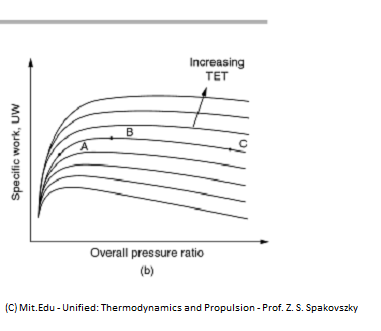

Kaveri Design Choice Rationale: With this historical background in place, IMVHO, I’d speculate that what really happened in 1990 or thereabouts, while the performance design (and also the materials roadmap) for Kaveri was being finalized, is that the designers and technologists of GTRE were faced with a major dilemma:

- 1) On the design front,

- a. is it sensible to aim for the various core design parameters (e.g. OPR, TET, BPR, combustor efficiency, supersonic compressor regimes, ultra-low aspect ratio blades, blisk manufacturing etc.) of the various modern engine development programs then in R&D

OR

- b. to stick to the already understood basic design layouts of the GTX-37U and 37UB, try to introduce medium-level improvements on these design parameters, and still meet the Kaveri specs.

- 2) On the materials front,

- a. aim for the materials technology being worked on at various material design houses (e.g. 2nd and 3rd Gen SCBs, DS based later-stage compressor blades, 1st gen SCB based , Ceramic and Polymer Matrix based combustors and static-engine parts etc.) and provide a quantum jump in the performance parameters that were being asked of the Kaveri specs

OR

- b. provide a more conservative, incremental advancement in material tech (e.g. introduce directionally solidified blades for the HPT, Ti and Ni based equiaxed-cast compressor blades, contemporary “bolted” disk and blade interfaces, annular combustor etc.) and still achieve the Kaveri specs.

So the decision matrix then may have looked like:

1(a)2(b) ---|--- 1(a)2(a)

---------- Risk ------------>

1(b)2(a) ---|--- 1(b)2(b)

The GTRE technologists and designers chose 4th quadrant i.e. 1(b)2(b) – of course, with a hidden/inner ambition of getting to the 1st quadrant stuff concurrently and as the general technological level of the country advances in next 2 decades.

Overall Design Goals met/not-met: Pls, one word of caution towards over-emphasizing the success of dry-thrust, 90% wet thrust achievement (SFC, well, not sure) etc – yes those values are achieved, but at what weight (and maybe SFC also) penalty?

If you look at the chart above, a prev gen GTX-37UB would also have met these figures, wouldn’t it (with even more weight and SFC penalty)?

So IMO the right way of labelling Kaveri is to call it a qualified success. For the first time, if pursued with no let-up through the flight-test programme, it will validate a flying turbofan engine – in technological terms it would

1) validate (and provide invaluable empirical data on) the CFD and basic mechanical design of a twin-spool 80KN turbofan (90s level)

2) give enough design and manufacturing confidence in 80s-level material tech

Without these there’s no hope of leapfrogging technological gens etc (refer to epilogue section for a glimpse of that), and we're doomed to play catch-up forever.

Inference: But there-in lies the problem,

i.e. first, recall the findings of the engine evaluation study of 1985, which basically stated that the LCA engine specs were set too high to be met by any contemporary engine of the time. Now contrast that with the constraining technological choices (aka Conservative-Conservative) made for Kaveri to achieve those specs.

This essentially means there’s a wafer-thin margin of error in meeting both the core engine-design parameters and the enabling material/manufacturing design/technology. Even a shortfall in one parameter may spell doom – and that’s precisely what happened with Kaveri, albeit with shortfalls in meeting almost all design parameters (admittedly, each by a small margin, but big enough to contribute to the compounding effect of the shortfall we see today).

But wait, before we start dishing out our advice from our hindsight-is-20/20 vantage point, let’s try to think through why the GTRE folks would not consider the high-high risk 1(a)2(a) approach.

Well, if you look at our national psyche of extreme navel-gazing, if-it’s-made-in-India-must-be-useless, pricing-of-tech-dev-in-terms-of-social-upliftment-missed-cost, 3-legged-cheetah-labelling-user-attitude etc. (Shivji will have a longer list), the GTRE folks would be mortally scared of failures arising from such a high-risk endeavour.

Frankly, I’m not very sure it mattered to the GTRE folks whether the LCA flew or not, as long as they met the Kaveri design parameters. So when the larger program, due to scope creep, necessitated a requirement growth to a next-gen powerplant, Kaveri in its present technological form was not even close to it.

Plus all this talk of new imported core etc means exactly that – a fully imported engine in terms of jet-engine tech, nothing more nothing else.

That’s the price to be paid for a pessimistic/stifling national outlook towards technological advancement, with zero tolerance towards failures and an import-at-all-costs attitude.

Epilogue: While we constantly continue to berate the GTRE folks for technological failure etc., one small snippet needs understanding.

In the mid-2000s, desperate to reduce the weight of the overweight Kaveri (it’s still overweight by 150 kg or thereabouts), the GTRE folks went ahead experimenting with the absolute cutting edge of material tech, i.e. Ceramic Matrix Composites (CMC) and Polymer Matrix Composites (PMC), on some of the non-rotating, non-critical components. CMC was targeted at a few hot components like the nozzle divergent petals, exhaust cone etc., while PMC (high-temp PMR-15 class) was targeted at the bypass duct, the CD nozzle cowls at the back etc.

The aim was to reduce weight by 30Kgs (i.e. approx. 20-25%).
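A quick arithmetic check on these figures, assuming the percentage is meant relative to the excess weight (the post does not say what the 20-25% is measured against, so this reading is an assumption). The helper name is made up for illustration.

```python
def share_of_excess(saving_kg, excess_kg):
    """Fraction of the excess weight removed by a given saving."""
    return saving_kg / excess_kg


OVERWEIGHT_KG = 150.0     # "overweight by 150 kg or thereabouts"
TARGET_SAVING_KG = 30.0   # weight-reduction aim quoted above

share = share_of_excess(TARGET_SAVING_KG, OVERWEIGHT_KG)
print(f"30 kg is {share:.0%} of a 150 kg excess")

# Excess weight that would make the same 30 kg equal to 25%.
implied_excess_at_25pct = TARGET_SAVING_KG / 0.25
print(f"30 kg would be 25% of a {implied_excess_at_25pct:.0f} kg excess")
```

On this reading the quoted range corresponds to an excess somewhere between about 120 and 150 kg, which is consistent with the "or thereabouts" in the post.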

In contrast, pls google around for CMC and PMC related R&D and, more importantly, its usage on various aero-engines by established western players (Hint: some links are there a couple of pages back on this very thread).

This confidence and attitude are the true by-products of the Kaveri engine development program.

[The End]

Last edited by maitya on 17 Jan 2014 23:42, edited 1 time in total.

Re: The Kaveri Saga - India's attempt to build a modern Turb

So where exactly are we wrt Kaveri ... the following info may be a bit dated (from 2009), but it still accurately reflects the overall state of what has been achieved in the program so far and, more importantly, the various shortfalls requiring more work and handholding.

Posting in full, k prasad's entire post (pure gold, must say) from AI09 ...

k prasad wrote: Ok.... this is the GTRE story - (someone come up with sad music plz).... from the Aeroseminar.

An overview of the Kaveri situation was provided by the GTRE director, T. Mohan Rao, who was accompanied by his senior scientists. The hall was packed, and the language and tone of his speech were sadly self-deprecating and pleading. Almost as if DRDO has also started losing faith - he had to explain what's going on and why it's happening. Sad to see, but there are clear silver linings in the story.

1. He pointed out that the change in IAF requirements and the increase in all up wt by 2 tons killed the Kaveri as they knew it, simply because it could not in any way be able to achieve the new requirements... he was quite angry that they had been blamed for what was obviously not their fault, ie, a low-performing Kaveri for the updated reqs. Bypass Ratio is 0.16 to 0.18... he pointed out that if it had to meet the new stds, the bypass would have to be at least 0.35 to 0.45.

2. 4 Cores and 8 Kaveris built, 1800 hrs testing done.

Thrust demonstrated: 4774 kgf dry (design value reached). 7000 kgf reheat (2.5-3% shortfall)

3. Pressure ratio - 21.5 overall.

Fan - 3 stage, 3.4 pressure ratio, Surge margin>20.

Compressor - 6.4 pressure ratio, Surge margin>23.

Combustor - efficiency >99%, high intensity annular combustor. Pattern factor of 0.35 and 0.14

Note: These are ACHIEVED values.
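As a rough consistency check on the figures above: for compression stages in series, the overall pressure ratio is approximately the product of the individual ratios if losses between components are ignored. The sketch below applies that to the quoted fan and compressor values. The no-loss assumption and the function name are mine, not from the talk.

```python
# Figures as quoted in the post above.
FAN_PR = 3.4
COMPRESSOR_PR = 6.4
QUOTED_OVERALL_PR = 21.5


def stacked_pressure_ratio(*stage_ratios):
    """Multiply the pressure ratios of compression stages in series."""
    total = 1.0
    for ratio in stage_ratios:
        total *= ratio
    return total


ideal_overall_pr = stacked_pressure_ratio(FAN_PR, COMPRESSOR_PR)
relative_gap = 1.0 - QUOTED_OVERALL_PR / ideal_overall_pr

print(f"fan x compressor = {ideal_overall_pr:.2f}")
print(f"quoted overall   = {QUOTED_OVERALL_PR:.2f}")
print(f"relative gap     = {relative_gap:.1%}")
```

The product comes out slightly above the quoted 21.5; the small difference could be rounding or inter-component losses, but the post does not say which.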

4. The present Kaveri will not power combat LCAs, although it will be fitted to an LCA within 9 months. The new program, which is the Kaveri with the Snecma Eco core of 90kN, will be used. The prelim design studies and configuration have been completed.

5. Birdhit requirements of 85% thrust after hit at 0.4-0.5 Mach have been shown and achieved.

6. He pointed out the major factor in delays being them not being given enough infrastructure and testing facilities - Govt has not given funds, babus have sat on them. Instead, they have had to go to CIAM in Russia and Anecom in Germany for tests.

He mentioned that this was the biggest problem - one of the issues they have was in engine strain and the blade throws - they tried to isolate all the causes for 3 yrs, but only when they took it to CIAM for the Non Intrusive Strain Measurement (NSMS) tests did they realize that there were excess vibrations of the 3rd order of engine frequency being developed.... imagine if the facility was there in india.

Then, the compressor tests also, it was only at the Anecom that they could see that the 1st 2 stages were surged by 20%, while the rest were "as dead as government servants" (his quote - shows how low on confidence they are i guess). He pointed out that that would have saved a lot of time and money if that facility was in india. They have since fixed the issue.

Then, the afterburner tests, (the much highlighted high altitude failure) at CIAM - the reqt is for 50% thrust boost over dry thrust at 88% efficiency. The K5 prototype failed in 2003, after working perfectly in the GTRE. They realized that they could not achieve lightup at high altitudes (Dry thrust worked ok).

They took another new engine block and the afterburner worked perfectly and has been certified to 15 km.

7. The good news..... they will conduct complete engine trials in CIAM in March. If these trials are successful (and they are highly confident), the Kaveri will be integrated on the LCA within 9 months.

The KADECU FADEC system with manual backup has also been fully certified.

8. The bad news again - The present requirements would need the core to pump out 15-20% more power, which is impossible... hence the eco. Not that there is anything wrong with the core.

He mentioned that otherwise, the Kaveri has met the original requirements, or will meet within the next month, and is good for all other uses except a "combat LCA" - ie, CAT, LIFT, LCA Trainer, etc.

9. When asked where we lack, he mentioned 4 key areas

a. BLISK - integrated single Blade and Disk

b. Single Crystal blades - he categorically said - We do not have that tech at all.

c. Thermal Barrier Coatings - TBC - very critical for high temp engine operation. A talk on this by an American Indian prof attracted a house full audience. He mentioned that this is highly critical and export controlled, so they dont have it.

The last two points were mentioned by Dir, DMRL as one of their areas of research, but I was not able to quiz him on it. PLEASE QUIZ ANY DMRL GUYS U MEET ON THIS.

Mohan Rao appealed that people should realize that this tech takes time, and money, and more importantly, willpower and support.... it's not being given by foreign nations, so if we have to develop it, it needs support. This stance found strong support from Saraswat, Sundaram and Selvamurthy in the closing ceremony.

They are not looking at TVC just yet, and it is in the hands of other labs at the moment.

However, the ADE presentation on UCAVs showed a future Indian UCAV (2015) with no tail (MCA design), a non-conventional wingform, and a 3 axis TVC.

10. OK, some nos....

Fan - Successful tests at CIAM

Compressor: (nos in brackets are design values)

6 stage axial flow, 3 stage variable vanes with IGVs.

Corr. tip speed ~370 m/s

Inlet diam: 590 mm

Mass flow: 24.13 kg/s (24.3)

Pressure: 6.42 (6.38)

Efficiency: 85.4% (85%)

Surge %: 21.6 (20% designed)

Combustor:

Has undergone aero testing at CIAM

K8 V4 combustor is close to design.

Turbine:

Pressure = 3.6

Mass flow function= 1.1

Isentropic eff = 85%

Max. TET = 1700K

Is a success, has met design.

11. Future uses:

Navy - KMGT - 1 MW for small ships being developed, 5-6 MW KMGT is a success and runs on diesel, instead of the usual kerosene aviation fuel.

The railways also want a 7-8MW CNG-run engine, which will be a challenge in terms of fuel supply rather than the combustion itself, which shouldn't be a problem.

Any qns???

maitya wrote:Pls note the thrust reported in 2009 in the above post by GTRE director, T. Mohan Rao, viz. Dry: 4774kgf - 46.82KN and Wet: 7000kgf - 68.65KN.

Now fast-forward to 2010-2011, and we have this, from The Hindu:

http://www.thehindu.com/sci-tech/techno ... 127075.ece

“In recent times, the engine has been able to produce thrust of 70-75 Kilo Newton but what the IAF and other stake-holders desire is power between 90-95 KN.

“I think with the JV with Snecma in place now, we would be able to achieve these parameters in near future,” they said.

So already around 8% growth in wet thrust has been achieved (along with around 10% growth in dry thrust) - these can't be achieved without an improvement in compressor efficiency, turbine efficiency, or both.

So justifiably a very good effort and something that GTRE needs to pride itself with.

But to go to the 81KN stage will require another 7% growth in thrust, which will have to involve further tweaking of the compressor stages.

IMO the mass flow rate *may* continue to be the issue (no published figure so far - the above indicates the compressor mass flow), which can be resolved by increasing the compressor stage pressure ratios, i.e.

1) either by making the compressor lighter (improved materials etc., so it rotates faster from the same power from turbine)

2) or by enhancing the aerodynamic efficiency of the compressor (which anyway was a compromised one, as nobody wanted to manufacture the originally designed fan-blades due to increased manufacturing complexity given the low volumes involved)

3) or by increasing the turbine efficiency (so going for higher TET but DS blades wouldn't work and will require SCB tech or by improving the blade-cooling tech)

None of the above are very easy paths to pursue and is actually the technology gen for the contemporary engines.

Talking about 90-95KN etc (interestingly, no mention of the dry thrust) is futile without going through the above-mentioned hoops and, nobody would like to part with their knowhow before they themselves have moved onto the next generation technology itself.
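The unit conversions and growth percentages in the post above are easy to re-derive. A rough sketch, using only the thrust figures quoted in this thread (the helper names are mine; the growth figure depends heavily on which end of the 70-75 kN range is taken, so treat the percentages as indicative only):

```python
KGF_TO_N = 9.80665  # standard gravity: newtons per kilogram-force

def kgf_to_kn(kgf):
    """Convert kilogram-force to kilonewtons."""
    return kgf * KGF_TO_N / 1000.0

def growth_pct(old, new):
    """Percentage growth from old to new."""
    return (new - old) / old * 100.0

dry_2009_kn = kgf_to_kn(4774)   # dry thrust quoted for 2009
wet_2009_kn = kgf_to_kn(7000)   # reheat thrust quoted for 2009
print(f"2009 dry: {dry_2009_kn:.2f} kN, wet: {wet_2009_kn:.2f} kN")

# Wet-thrust growth implied by the 70-75 kN range reported later
for wet_later_kn in (70.0, 75.0):
    print(f"wet {wet_later_kn} kN -> {growth_pct(wet_2009_kn, wet_later_kn):.1f}% growth")

# Further growth needed to reach 81 kN from the top of that range
print(f"75 -> 81 kN needs {growth_pct(75.0, 81.0):.1f}% more")
```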

sivab wrote:^^^ It was a known fact from 2008.

http://www.livemint.com/2008/08/1923534 ... pment.html

Mohana Rao: We have a functional engine, but there is a slight shortfall in performance. It has achieved dry thrust of 4,600kg and reheat thrust of 7,000kg in Bangalore, which is around 3,000ft above sea level. So, it would be around 5,000kg dry thrust and 7,500kg reheat thrust at sea level. The engine is short of thrust by 400kg and overweight by around 150kg. Also, we still have to perform long- endurance tests of the engine to run for many hours.
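The Bangalore-to-sea-level adjustment in the quote can be approximated with the usual corrected-thrust idea, i.e. dividing measured thrust by the ambient pressure ratio. A minimal sketch, assuming a standard (ISA) atmosphere and taking "around 3,000ft" literally; it ignores the actual test-day temperature and any engine-specific behaviour, so it is a first-order check only and not GTRE's own method:

```python
FT_TO_M = 0.3048

def isa_pressure_ratio(alt_m):
    """Ambient pressure / sea-level pressure in the ISA troposphere (below ~11 km)."""
    t0 = 288.15       # sea-level temperature, K
    lapse = 0.0065    # temperature lapse rate, K/m
    exponent = 5.25588
    return (1.0 - lapse * alt_m / t0) ** exponent

def sea_level_equivalent(thrust_kg, alt_m):
    """First-order sea-level thrust estimate: measured thrust divided by pressure ratio."""
    return thrust_kg / isa_pressure_ratio(alt_m)

alt_m = 3000 * FT_TO_M  # test-bed altitude as quoted
delta = isa_pressure_ratio(alt_m)
print(f"pressure ratio at {alt_m:.0f} m: {delta:.3f}")
for label, thrust_kg in (("dry", 4600), ("reheat", 7000)):
    print(f"{label}: {thrust_kg} kg measured -> ~{sea_level_equivalent(thrust_kg, alt_m):.0f} kg at sea level")
```

The estimate lands in the same ballpark as the "around 5,000kg" and "7,500kg" figures in the interview, which is about all a correction this crude can be expected to show.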

Re: The Kaveri Saga - India's attempt to build a modern Turb

One important aspect that is almost always overlooked (being less glamorous) are the dimensional specifications of Kaveri.

All performance parameters are required to be achieved within the prescribed dimensional parameters - there's no point in trying to create an engine which meets the performance parameters but exceeds the dimensional parameters like Weight, Dia, Length etc. As breaching them can make them impossible to fit in the airframe (or may require change, which normally sets back the actual program by decades) making them unusable. So what are the dimensional parameters of Kaveri?

... not exactly sure what you are trying to say.

Nowhere in the world billions of $s are spent in developing a science project, as you are alluding to, without clear-cut and exact specifications in place. And, for a turbojet/turbofan development programs, the dimensional and the performance parameters are absolutely vital on deciding which way program would go.

So just like any other turbojet/turbofan program, Kaveri also had its performance parameters (Th - 52/80KN, SFC - 80/207 kg/kN.h, TWR - 76N/Kg) defined, to be achieved within the specified dimensional constraints (of L - 3.5m, D - 0.9m and Wt - 950Kg). Those performance and dimensional parameters are what the LCA airframe dimensions, strength and overall design (e.g. air-intake design) were themselves specified around.

PS: Also as I've pointed out in my previous post, these parameters were not only contemporary but actually world-beaters in those days - recall, how when the Kaveri design/performance-parameter-specifying-committee tried to look for another existing (or being on the verge of being put into use) military turbofan to cross-validate and baseline Kaveri’s parametric-model against what they’d have actually achieved (as opposed to copy-paste from some shiny brochures), they couldn't find any.

This should also put to rest, any notion of modesty/reticence towards the sheer technological scale this program intended to leap-frog – some may rightly say, that we aimed too high.

So anyway, if Kaveri would have met these specified parameters it would have been good enough for the LCA.

And which it almost did - except for the weight and wet thrust part, where it fell short by 10-15% - for which it can be labeled as failure etc, and GTRE folks needs to re-double their effort to regain back those shortfalls.

After all, theoretically (for the sake of argument), if it were to be argued that thrust is the be-all and end-all and dimensional constraints are not important, then the Kaveri program itself was hardly required - those thrust ratios were achieved almost a decade back by its predecessors (GTX-37 UB etc.)

On the contrary, dimensional parameters are extremely important as it would limit the mass-flow rate, number of compressor (and even turbine) stages that can be squeezed-in, the BPR itself etc - all having direct impact on the performance parameters like Thrust, Weight, SFC etc

(PS: a couple of pages back, I've posted a very very simplified excel-based turbojet "designing" tool - you may try and play around with those 3 simple parameters and do some cause-effect kind of analysis).

But that's hardly the point.

The point that I was trying to make above was about the design choices/technological roadmap chosen (due to the various factors discussed above) in developing Kaveri. That conservative approach meant that, while the program (and these design choices) were sufficient to meet Kaveri performance specs, it provided almost nil cushion against any performance parameter scope-creep.

So when LCA got overweight by 1.2tons or so (it was originally envisaged to be a 5.5-ton class fighter), the Kaveri core couldn't provide any easy scaling-up capability of the performance parameters required to address this scope-creep. But, how can the GTRE folks be directly faulted for not able to address this scope-creep etc - they didn't have any contribution towards LCAs weight-gain, after all.

Even then, mind you, theoretically, even now, if the mass-flow can be increased, this Kaveri core can have an enhanced thrust rating as well - but all other performance parameters like SFC will suffer big time, due to a further reduction in thermal efficiency (refer to that excel, a couple of pages back). So such stunts are always avoided.

So are there any +ves of this program? Yes, and actually there are too many to count - but all of these +ves can very easily be brought to naught if the Kaveri program is abandoned at this stage and not taken to its logical conclusion of completing a comprehensive flight-test program.

With this program, we have for the first time understood and will continue to understand the FD, Mechanical design and material/manufacturing technological/engineering aspects of 80KN class twin-spool turbofan engine (this is as cutting edge as it ever gets), as the next stages of the program are undertaken - something that has taken more than half-of-century by the industrialized world to master, and no nation will ever pass-on that knowledge, however friendly they are to us.

For example (just to make a point), the SPR of a HPC stage can be dramatically improved by carefully "introducing well-shaped" intra-stage shock waves (where is N^3, when you need him most).

And given the OPR achieved so far in Kaveri is about 21 or thereabouts (against a design goal of 27), this can be a very tempting option. But who is going to tell us the blade strength, blade geometry, aspect ratio, solidity etc which helps in initiating, sustaining and optimizing it. And even if they do, who is going to tell us the material composition and physical characteristics of these HPC stage blades that will be required to achieve it - nobody, I repeat, absolutely nobody. We will have to try, fail, try again, fail again, try again and learn it the hard way.

There’s absolutely no other way.
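To put the OPR figures above (about 21 achieved against a goal of 27) in perspective, the textbook ideal Brayton-cycle relation ties thermal efficiency directly to overall pressure ratio. A minimal sketch, assuming an ideal cycle with a constant ratio of specific heats of 1.4 (real engines fall well short of these numbers; only the trend is meaningful):

```python
GAMMA = 1.4  # ratio of specific heats for air, assumed constant

def ideal_brayton_efficiency(opr):
    """Ideal Brayton-cycle thermal efficiency: 1 - OPR^(-(gamma-1)/gamma)."""
    return 1.0 - opr ** (-(GAMMA - 1.0) / GAMMA)

for opr in (21.0, 27.0):
    print(f"OPR {opr:.0f}: ideal thermal efficiency {ideal_brayton_efficiency(opr) * 100:.1f}%")
```

Even in this idealised form, the missing pressure ratio costs a few points of thermal efficiency, which is why raising stage pressure ratio is such a tempting route.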

So for Kaveri, how are these dimensional parameters impacting/constraining its performance shortfall?

Length: 137.4 in (3490 mm)

Diameter: 35.8 in (910 mm)

Dry weight: 2,724 lb (1,235 kg) [Goal: 2,100-2450 lb (950-1100 kg)]

Components

Compressor: two-spool, with low-pressure (LP) and high-pressure (HP) axial compressors:

- LP compressor with 3 fan stages and transonic blading

- HP compressor with 6 stages, including variable inlet guide vanes and first two stators

Combustors: annular, with dump diffuser and air-blast fuel atomisers

Turbine: 1 LP stage and 1 HP stage
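One way to see what the weight overshoot does is to divide thrust by engine mass for the goal and actual weights listed above. A rough sketch, assuming the 80 kN wet-thrust specification mentioned in this thread (a target, not an achieved value) and the helper names below, which are mine; since the thread does not state the basis of its own TWR figure, these outputs need not match it:

```python
G0 = 9.80665  # standard gravity, m/s^2

def thrust_per_kg(thrust_kn, mass_kg):
    """Thrust per unit engine mass, in N/kg."""
    return thrust_kn * 1000.0 / mass_kg

def thrust_to_weight(thrust_kn, mass_kg):
    """Dimensionless thrust-to-weight ratio."""
    return thrust_kn * 1000.0 / (mass_kg * G0)

SPEC_WET_THRUST_KN = 80.0  # wet-thrust spec as quoted in the thread
for label, mass_kg in (("goal (low)", 950.0), ("goal (high)", 1100.0), ("actual dry weight", 1235.0)):
    print(f"{label:>18}: {thrust_per_kg(SPEC_WET_THRUST_KN, mass_kg):.1f} N/kg, "
          f"T/W = {thrust_to_weight(SPEC_WET_THRUST_KN, mass_kg):.2f}")
```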

Re: The Kaveri Saga - India's attempt to build a modern Turb

The other important aspect is to ascertain how much of an impact the Kaveri R&D subprogram failures are having on the parent program - aka R&D, productionising and operationalizing of LCA.

Any failure or slippage of a vital program (described as the "Achilles Heel", right at the inception) like that of Kaveri can render the parent LCA programme so delayed that it loses its relevance by the time it fructifies. So it's worthwhile to understand the rough timeline of the Kaveri development plan, its touch-points with the LCA program and any impact (if at all) on the parent program.

So the question arises: is it true that because of non-delivery of Kaveri (with the required TWR, if I may add), the LCA program has been delayed, i.e. IOC/FOC could have happened earlier had the Kaveri engine (with the required TWR) been made available a couple of years back - maybe around 2005-6?

Kaveri engine development is part of the overall LCA program - true - however it was well understood that this aspect of the program would be singularly the riskiest one and is thus well mitigated for (hint: decision timeline for getting GE F-404 and the fact that absolutely no redesign/analysis etc required for matching the F404 engine Fans with the air-inlet design - it was as if the whole airframe was built around F404).

So whilst it is unfortunate that Kaveri program itself didn't deliver, the overall program itself (from a platform-availability to the end-user perspective) was not delayed because of this unavailability.

Sid wrote: ^^

Philip ji, first TD flew in 2001, PV in 2003, all with the same engine, the 404. Also, for LPs (as per your link) it was decided to have the 404 in 2003. They also first flew in 2007. All indicating high compatibility with the aircraft design.

I agree with Maitya's deduction that from the beginning LCA was designed around 404 not Kaveri. Until or unless Kaveri is 404 clone and easily swappable.

Sidji thanks. Actually the TDs got rolled out with the F404 much earlier than their 1st flights - details are in the following brief timeline:

1. LCA was always intended to fly with F404-GE-F2J3 for the FSED-I phase i.e TD 1/2 + PV 1/2

No sane program management team would risk flying an unproven airframe with an unproven engine.

The dimensional similarity between Kaveri and F404 (e.g Dia - F404 35in vs Kaveri 35.8in, Weight F404 1036Kg vs Kaveri 950-1000Kg etc) are not mere happenstances. The "desired" requiremental dimensions of the LCA power-plant were carefully chosen (in 1987-88 etc) after proper due-diligence of what would be realistically available in 1995-99 or thereabouts.

i.e. in lay-man terms, the dimensions of the intended LCA power-plant (Kaveri) were based on those of forecasted contemporary turbofan engine (that would be available in late 90s) achieving similar Thrust, SFC and a host of other parameters.

Of course, it is customary in such ab initio development programs to have the intrinsic design parameters like SFC, OPR, TeT a notch above those that would be available in the contemporary engines during its developmental phases - more in sync with the forecasted parameters of engines that would be contemporary during at least the 1st half of the intended platform's ops life (of 2 decades +).

So not only did the rolled-out LCA TDs (TD1 in 1995 and TD-2 in 1998) have the F404-GE-F2J3 installed on them, but it was also on both PV1 and PV2 in the early 2000s.

All as per planned.

2. Also, as per the original plan (of 1987-88), if Kaveri sub-program succeeded, it was to be fitted onto LCA for the FSED-II phase (PV-3 as the production-prototype variant, PV-4 as the naval variant, and PV-5 as the trainer variant). And of course, then roll-on to the LSPs and SPs as well.

3. So while 1st phase of flight testing of the FSED-I phase was on-going (it itself got delayed due to 1998-sanctions and resultant delays in developing the FBW system), in parallel the Kaveri program was also progressing.

And the Kaveri program started off well actually - core (Kabini) first ran in 1995, full 1st prototype engine (Kaveri) began testing in 1996 and by 1998, all five prototypes (K1-K5/K6) were in testing.

But, in 2003 itself, while LCA was merely into 2 yrs into FSED-I flight testing, the indigenous HPT DS-blades started giving up. So, as a last ditch attempt, to keep the engine program on track, the DS blades were imported from Snecma (this import bit is purely IIRC and I need to cross-check it again).

4. But in mid-2004, the Kaveri failed its high-altitude tests in Russia. So it was then decided, Kaveri will not be ready for the FSED-II phases and GE was awarded a US$105 million contract (in 2004) for 17 F404-IN20 engines for LSPs and NPs (and delivery of which began in 2006).

5. The IAF ASR change (justified, in my opinion) happened in 2004/05 - this led to further delays in re-configuring the basic airframe (and thus further increased weight) to cater to it - so all FSED-II platforms were delayed towards incorporating these changes.

6. PV-1/2/3 and LSP-1 (in 2007) all flew with 2J3 version and LSP-2 with IN20 (in 2008) - followed by other LSPs.

All as per their revised schedule, dictated singularly by the program flight testing schedule - plus the delays due to re-configuring the platform due to ASR change (and of course the prototype building pace by HAL - aka "hand built" platforms).

7. And also due to pt.5 above, the resultant weight increase also made Kaveri completely unsuitable for LCA Mk1. So, in 2007, an additional 24 F404-IN20 afterburning engines were ordered to power the first operational squadron of Tejas fighters – and of course, Kaveri program itself was then officially delinked with the main LCA programme.

Re: The Kaveri Saga - India's attempt to build a modern Turb

But the above still leaves out the confusion about sanctions etc and availability of GE F404 engines. Well, it so happens, right after the LCA program was sanctioned (in 1983), the engine feasibility study (of 1986) and Kaveri program sanction (of 1989), GTRE (actually HAL then) went ahead and bought the GE F404 engines required for the FSED-I phases (and some more).

This is corroborated in this 1989 NY Times article (By SANJOY HAZARIKA, Special to the New York Times Published: February 05, 1989) India Plans to Increase Arms Imports and Exports.

Excerpts

…

Officials also are discussing purchase of technology from France and the United States for components of a futuristic light combat aircraft planned by 1996. New Delhi already has bought several General Electric 404 engines for use on prototypes of this aircraft, opening the door to greater military cooperation more than 20 years after Washington ended arms sales to India.

…

Plus, the 1998 sanctions didn’t have much of an impact either, as these engines were already integrated into TD1 and TD2 – plus the LCA internal design was a perfect match for the dimensions of the F404 (refer to my previous post on the dimensional similarity between Kaveri and F404), so future integrations were also not much of an issue.

UlanBatori (BRF Oldie, Posts: 14045, Joined: 11 Aug 2016 06:14)

Re: The Kaveri Saga - India's attempt to build a modern Turb

"There’s absolutely no other way."

Quite true. So they need to either have a small team work very hard for 20 years, or have 30 teams work very hard for 2 years - sort of in Archimedes mode, as in, u don't deliver, ur head is delivered.

Given that this is so crucial, why is the Indian defense establishment unable to articulate the need for this sort of effort? Or is the truth that you can't find 3 teams, let alone 30, that will actually do the work?

I have to say that in 30 years of trying, I have come to the conclusion that if there are really dedicated university-based aerospace research teams in India, I have not found them. Seems like there are a few people in DRDO and ISRO and BARC who care, but the rest seem to be just, well... I don't want to be insulting.

A couple of years ago, IISc was establishing a Pratt&Whitney Chair - did they find someone and is s(he) moving ahead to solve the engine problems?

Last edited by Indranil on 18 Jan 2014 10:26, edited 2 times in total.

Reason: No rants. No opinions. Shudh gyan please. Technical shortcomings matched to particular academic excellences would make an excellent post. In that respect are there specifics where the newly formed NACG, NMCC and DDMB teams be helpful?

Re: The Kaveri Saga - India's attempt to build a modern Turb

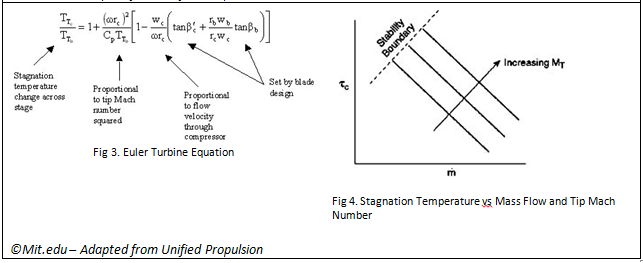

So it's high time we try and decode where exactly Kaveri is lacking, not only in reaching its dry and wet thrust (actually TWR) levels, but also why scaling up to GE F414-level thrust appears to be such an insurmountable challenge.

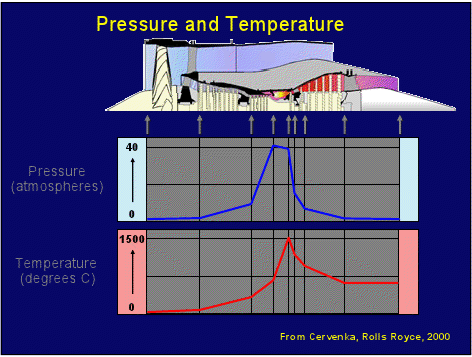

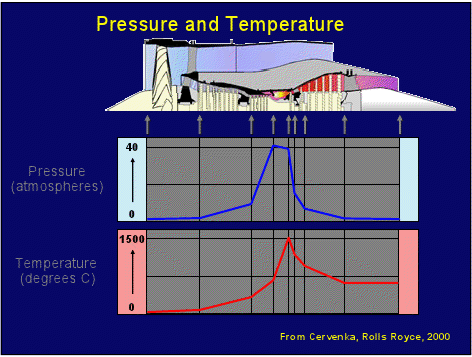

But before doing that, it's necessary to understand the current layout of the Kaveri wrt temperature and pressure distribution.

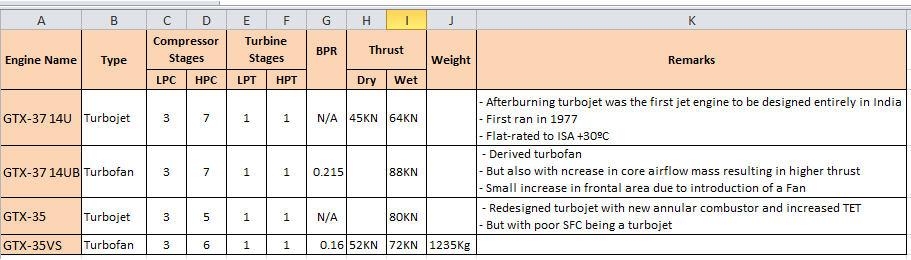

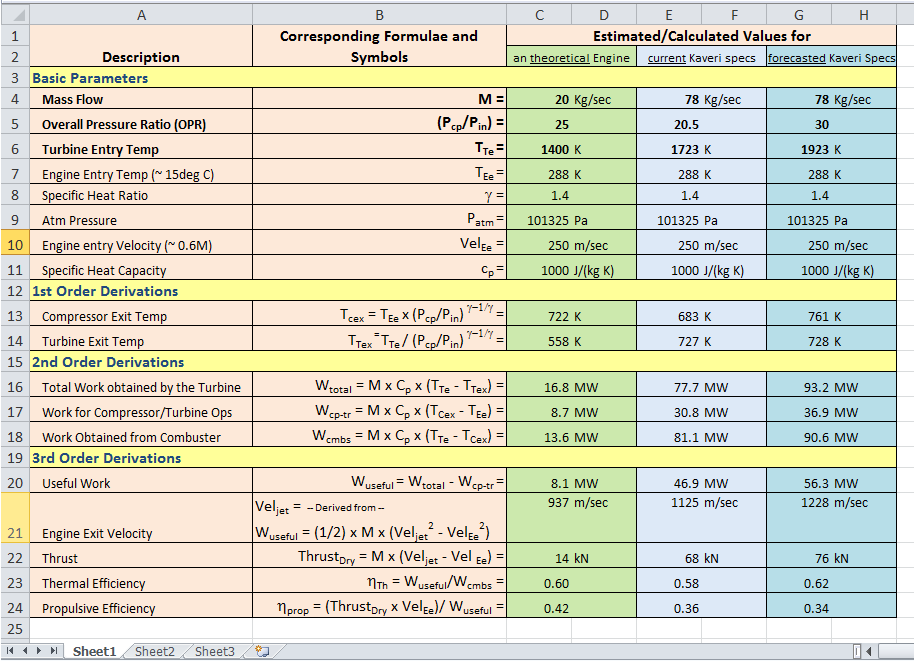

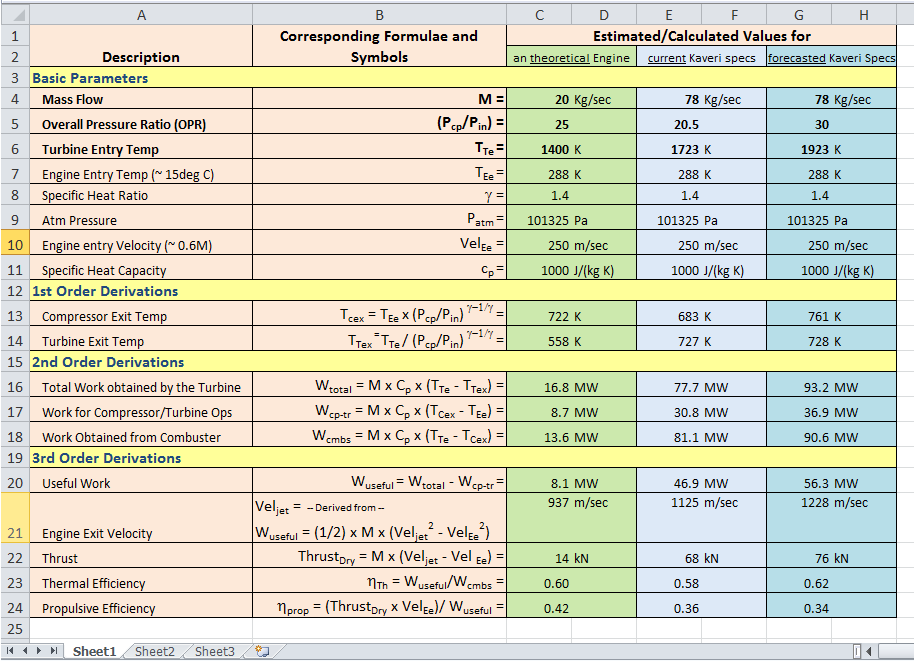

[A Simple Excel based Turbofan analysing tool]

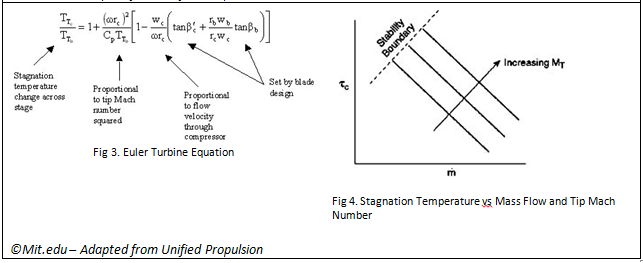

Another important aspect of discussing highly technical subjects (like a turbofan) from a lay-man point of view is the inevitable "what-if-then ..." kind of scenarios that crop up from time to time, e.g. it's natural to wonder: ok, to increase the dry thrust by 10%, by how much should the mass-flow rate be increased (all other performance aspects being equal)?

These, though strictly theoretical exercises, do increase the understanding of a lot of aspects of the programme as a whole. The following is a humble attempt towards it:

But being a certified DOO, the method of overcoming it that appealed to me most was to create a simple Excel sheet with the basic gas-turbine parameters, derive the other dependent parameters from 1st principles, and then use these derivations and results for analysing/explaining generic gas-turbine queries/concepts/issues.

Agreed it's over-simplified (e.g. Wcmpr = Wtrbn) and way-too generic (e.g. both compressor and turbine stages are isentropic), to the point of making some of the explanations even invalid, but still it helps in analysing/explaining.

Plus of course, this allows people to play around with various permutation-combination of the 3 basic turbojet parameters (viz. OPR, TET and Mass Flow) and start deriving and designing their own “paper-turbojets”.

So here goes:

PS: Some Pir-review will be extremely welcome.

Method used to create this tool: The method I've used is,

1. first take only 3 very basic input variables (viz. OPR, TeT and Mass Flow values)

2. plug these in along with other constants, viz. Engine Entry Temp, Specific Heat Ratio (for a constant gas mass and volume), Atm Pressure of the engine operating scenario, Velocity of Air Intake and Specific Heat Capacity.

3. Using these, derive other basic gas-turbine parameters (depicted as 1st Level, 2nd Level and 3rd Level derivations)

4. Use the calculation/derivation process to explain away, from a lay-man pov, the interplay between various turbo-jet concepts.
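For readers without the spreadsheet at hand, the four steps above can be sketched in a few lines of Python. This is only a minimal sketch of an ideal turbojet cycle under the same simplifications the post admits to (isentropic compressor and turbine, compressor work equal to turbine work). It is not a reproduction of the actual Excel tool. The function name, the dictionary keys, the default constants, the full-expansion nozzle and the sample inputs are all assumptions made for illustration; combustor pressure loss, fuel mass, ram compression and the bypass stream of a real turbofan are ignored.

```python
import math


def ideal_turbojet(opr, tet_k, mdot_kg_s,
                   t_in_k=288.15, p_atm_pa=101325.0,
                   v_in_m_s=0.0, gamma=1.4, cp=1005.0):
    """Ideal turbojet sketch: isentropic stages, W_compressor == W_turbine.

    All names and default constants are illustrative assumptions.
    """
    k = (gamma - 1.0) / gamma

    # 1st level: compressor exit state from the overall pressure ratio.
    t_comp_out = t_in_k * opr ** k
    p_comp_out = p_atm_pa * opr
    w_comp = cp * (t_comp_out - t_in_k)  # J per kg of air

    # 2nd level: the turbine hands back exactly the compressor work.
    t_turb_out = tet_k - w_comp / cp
    if t_turb_out <= 0.0:
        raise ValueError("TeT too low to drive the compressor")
    p_turb_out = p_comp_out * (t_turb_out / tet_k) ** (1.0 / k)
    if p_turb_out <= p_atm_pa:
        raise ValueError("no pressure left to expand through the nozzle")

    # 3rd level: nozzle expands fully to ambient pressure.
    t_exit = t_turb_out * (p_atm_pa / p_turb_out) ** k
    v_exit = math.sqrt(2.0 * cp * (t_turb_out - t_exit))
    thrust_n = mdot_kg_s * (v_exit - v_in_m_s)

    return {
        "t_comp_out_k": t_comp_out,
        "t_turb_out_k": t_turb_out,
        "p_turb_out_pa": p_turb_out,
        "v_exit_m_s": v_exit,
        "thrust_n": thrust_n,
    }


# Illustrative inputs only (not figures taken from the spreadsheet).
base = ideal_turbojet(opr=21.5, tet_k=1700.0, mdot_kg_s=78.0)
more_air = ideal_turbojet(opr=21.5, tet_k=1700.0, mdot_kg_s=78.0 * 1.10)
print(round(more_air["thrust_n"] / base["thrust_n"], 3))
```

In this idealised model the exit velocity does not depend on mass flow, so thrust scales linearly with it: the "10% more dry thrust" what-if is met by 10% more mass flow, everything else held equal. A real engine will not be this tidy, since component efficiencies and matching shift with flow.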

Note: Here-in I’d like to also mention that the,

1. Green column was more towards validating the concepts/formulas used here-in, by comparing against published calc results for a certain set of the input variable values

2. Lighter blue column is to then use these baselined calcs (and assumptions) and play-around with the open-source (read Wiki) Kaveri parameters

3. Darker Blue is to do the above (like the lighter blue column) but with the aspirational parameters of Kaveri and see where these calcs go.

So with this tool in place, I can now attempt to analyse/explain some of the turbo-jet idiosyncrasies and hopefully be able to arrive at some conclusions - but that's for some other day.

Disclaimer: This is a much more rudimentary and humble attempt than the legendary missile-performance-predictor tool that ArunSji created years back - so pls avoid bringing it up for comparison etc (if done, this stands no chance at all and will bite the dust in the first few secs itself)

PS: Can somebody help this html-challenged-foggy with how to type superscript/subscript and symbol-laden formulae in the forum posting software?

NRao wrote: Much water has flowed under the bridge and we are where we were when it all started?

Livefist :: August 2010 :: Kaveri's Compressor Blades + The Indian Single Crystal Effort

1) That presentation (to be clear, from : India's Defence Metallurgical Research Lab (DMRL) in Hyderabad) is from 2010 (there could be newer versions out there)

2) It does not claim that the Kaveri has SCB, it very well could have if that were a fact

3) It states, like a few posters here have, that India does have access to SC technology

...

...

But, here is an interesting diagram, we now have a basic idea of temp/pressure/alloys in a Kaveri (it may have changed):

srin wrote: Thank you maitya saar. One more question ... ?

srin, on a slightly different but relevant note, pls appreciate that the problem with trying to analyse/explain turbojet workings strictly from a lay-man pov is this dependence on rudimentary mathematics to explain away complicated, multi-disciplinary high-funda stuff that includes exotic CeeFDee concepts like 3D NS, Boltzmann equations, supersonic shock propagation, boundary value conditions of flow etc etc.

Last edited by maitya on 19 Jan 2014 09:38, edited 3 times in total.

Re: The Kaveri Saga - India's attempt to build a modern Turb

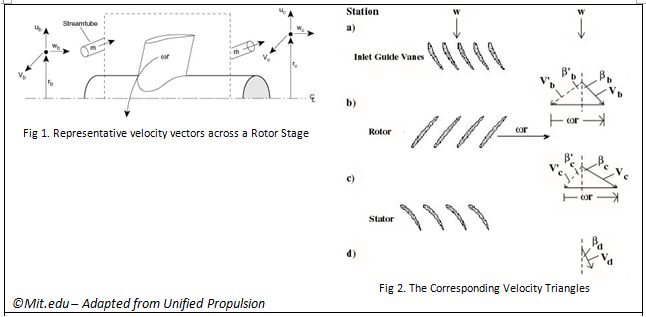

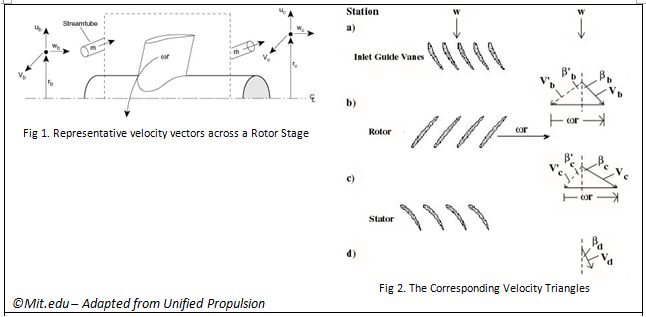

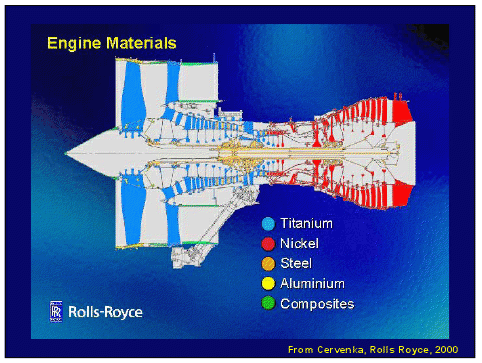

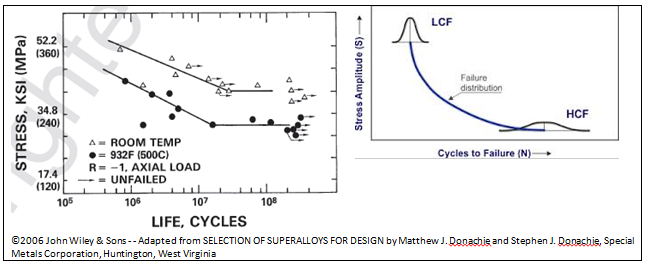

Now let's first look at the most difficult and strategic aspect of a turbofan - namely the turbines (and more precisely the HPT, to be followed by the LPT).

There is quite a bit of information scattered across various threads that needs consolidating in one place in this thread (for archival and ready reference).

First thing first, Shivji messed up his invaluable uploads from AI13 on Kaveri by moving the infoboards and images.

They need to be linked from here – so here they are (credit and copyright completely goes to Shivji and Shivji alone).

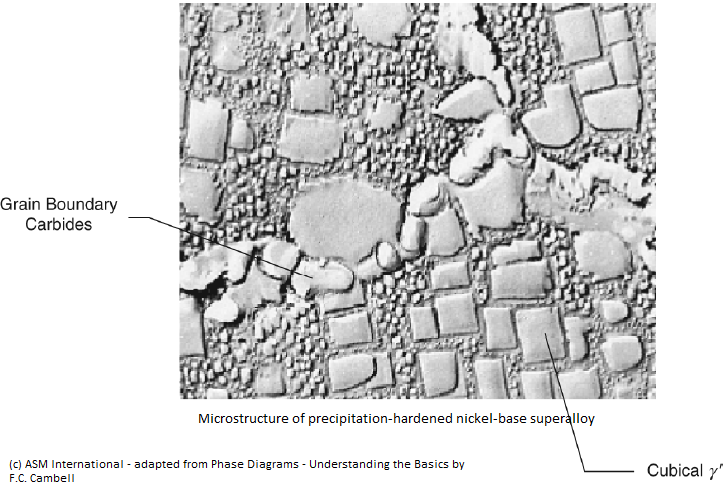

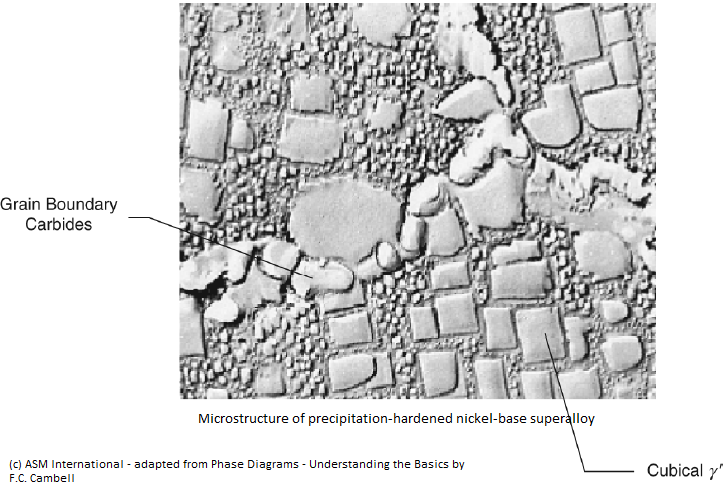

In lay-man terms, what do these images signify vis-à-vis GTRE's Kaveri/Kabini development project? Well, from a 60K ft level, these images are the 1st proof of Single Crystal Blade manufacturing (or should I just leave it at development only) in India.

But at a slightly lower level, we still need to understand, of course purely from a lay-man’s pov, why is this SCB dev/design capability so important for us (from any indigenous military jet engine dev capability pov) - and, more importantly, where do we stand today (from open source info only) in achieving this capability.

So the remainder of this post is an attempt (must admit a bit audacious one) to detail this aspect from a purely lay-man pov.

So here goes:

First the problem statement:

A typical turbine blade (an HPT blade to be precise) in a turbofan would be rotating at about 10K rpm in a (say) 1600 deg C operating environment, which would mean the tip is moving at approx. 1200 kmph.

So a blade of, say, 10 cm length at a radius of 0.5 m would see something like 160 MPa of centrifugal stress.

The turbine-blade material (in the HP and LP turbines) would thus need to handle that kind of mechanical stress along with a 1600 deg C thermal load, in order to extract work from the gas stream and convert it into mechanical energy in the form of a rotating shaft that turns the upstream compressors and fans. Plus the blades also need adequate oxidation resistance and hot-corrosion resistance at those operating temperatures.

A very tall order.
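The mechanical side of that problem statement can be checked with a back-of-envelope sketch. The code below assumes a blade of constant cross-section, for which the root stress is rho * omega^2 * (r_tip^2 - r_root^2) / 2. It also assumes a density of 8500 kg/m3 as a ballpark for a nickel superalloy, and it reads the 0.5 m radius as the tip radius. None of these assumptions come from the post, and the function names are made up for illustration.

```python
import math


def tip_speed_m_s(rpm, tip_radius_m):
    """Blade tip speed v = omega * r, with omega in rad/s."""
    omega = rpm * 2.0 * math.pi / 60.0
    return omega * tip_radius_m


def root_centrifugal_stress_pa(rpm, tip_radius_m, blade_length_m,
                               density_kg_m3=8500.0):
    """Root stress of a constant-section blade (assumed geometry).

    Integrates rho * omega^2 * r from blade root to tip.
    """
    omega = rpm * 2.0 * math.pi / 60.0
    root_radius_m = tip_radius_m - blade_length_m
    return density_kg_m3 * omega ** 2 * (
        tip_radius_m ** 2 - root_radius_m ** 2) / 2.0


v_tip = tip_speed_m_s(10_000, 0.5)
sigma = root_centrifugal_stress_pa(10_000, 0.5, 0.10)
print(f"tip speed ~ {v_tip * 3.6:.0f} km/h, root stress ~ {sigma / 1e6:.0f} MPa")
```

With these particular inputs the sketch comes out higher than the round figures of approx. 1200 kmph and 160 MPa quoted above, so those are best read as order-of-magnitude numbers. A real blade is tapered and often hollow, which lowers the root stress, and the result is very sensitive to the radius assumed.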

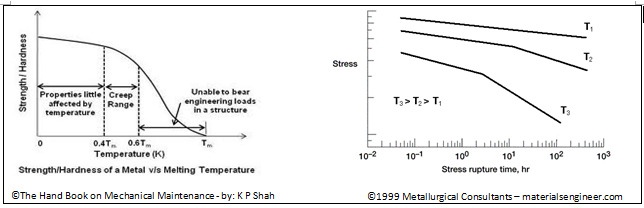

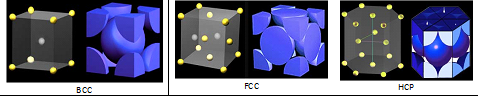

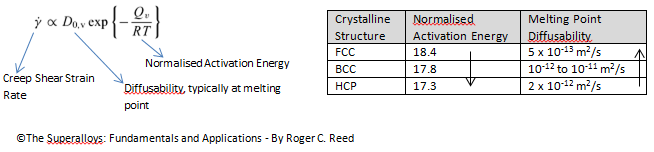

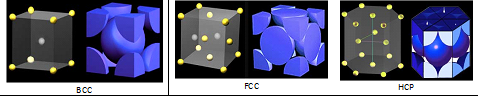

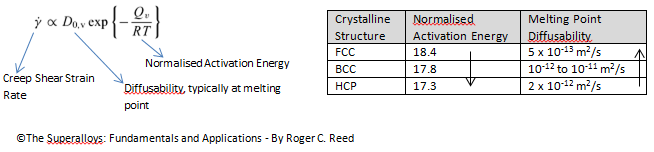

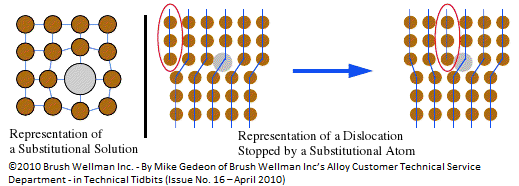

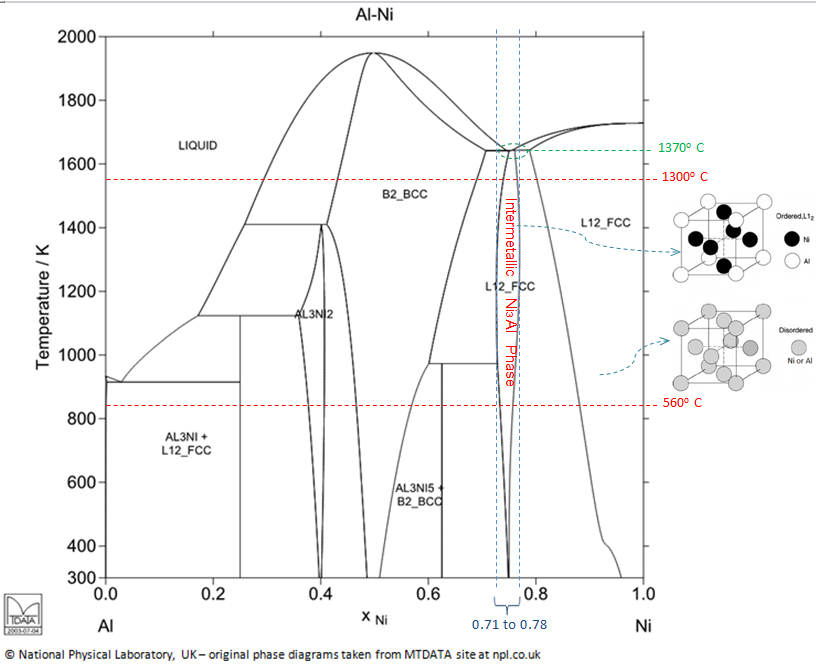

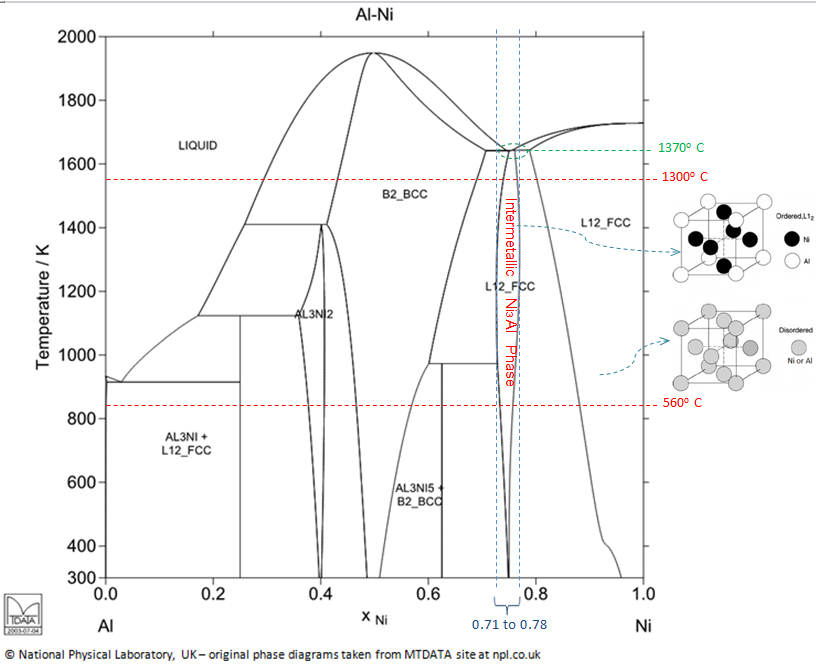

Blade Metallurgy Fundamentals:

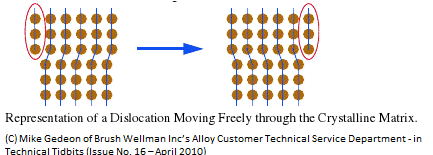

The blade metallurgy comes into picture there-in – pls refer to the images posted by Shivji from AI13.

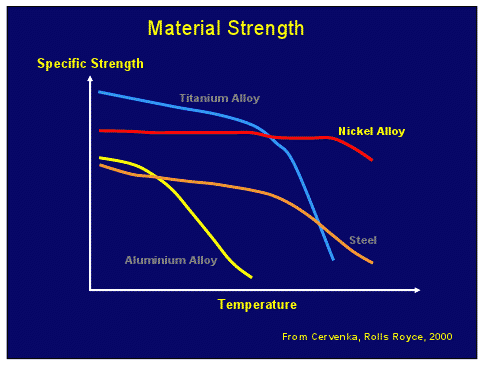

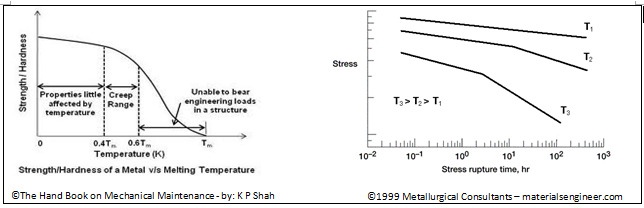

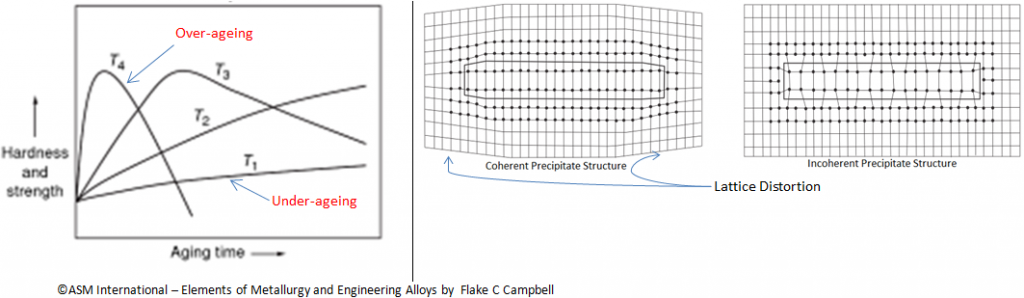

In the Equiaxed (aka traditional) blade, the grain boundaries run along both axes, longitudinal and transverse (aka both along the length and across it), so under thermal and mechanical stress, creep can propagate in any direction - but mostly the failures happen radially, the traditional weak link in the microstructure.

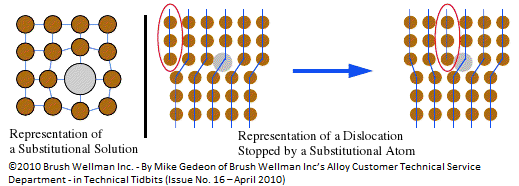

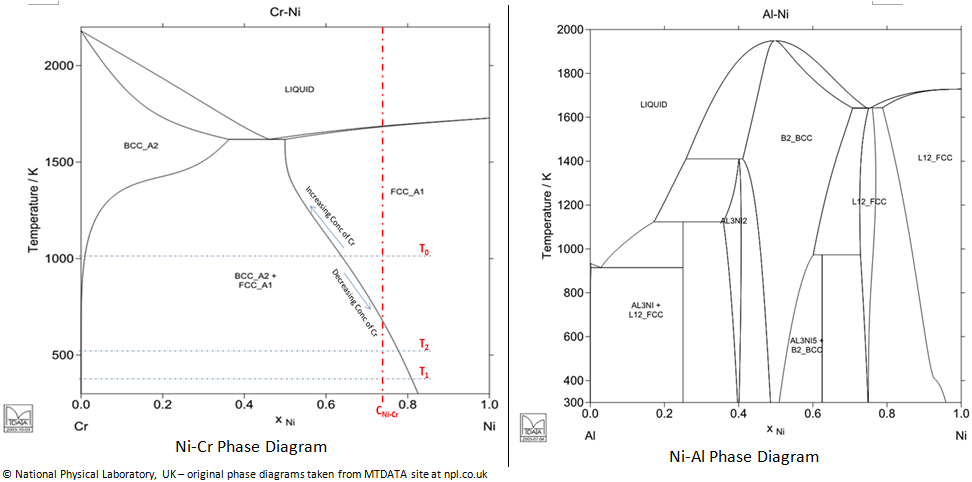

With Directionally Solidified (DS) blades, the transverse grain boundaries are removed and columnar microstructures are formed - basically, by carefully controlling the temperature gradient, a planar solidification front (across the cross-section of the aerofoil) is first formed and then the whole blade is solidified by moving this planar front longitudinally along the length.

This results in multiple oriented grains running parallel to the major axis (aka parallel to the length of the blade) with no transverse (aka cross) grain boundaries. But these long grain boundaries being weak, Boron and Carbon (and hafnium and zirconium) impurities need to be added to make them sufficiently strong against creep propagation.

This longitudinal (length-wise) alignment of grain boundaries confers a substantial increase in creep life. DS provides as much as 10 times better strain-controlled (thermal) fatigue resistance compared to Equiaxed blades - plus the impact strength is also higher (approx 33%) compared to Equiaxed blades.

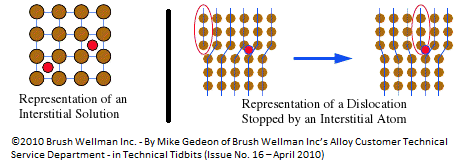

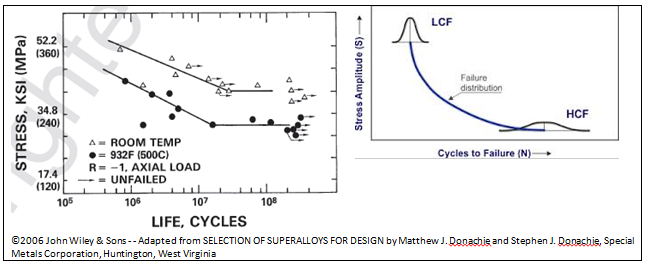

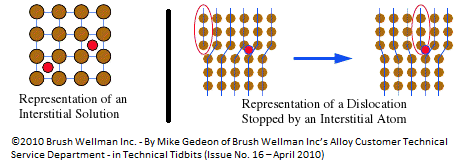

This schematic shows the DS and Single Crystalline (SC) casting processes. In each case, molten super-alloy is poured into a ceramic mold seated on a water-cooled copper chill. Grains are nucleated on the chill surface and grow in a columnar manner parallel to the unidirectional temperature gradient - this is achieved by slowly moving the mold away from the furnace.

Pls notice that, SC blade creation process is almost identical to that of DS process but with one very important difference - that of the presence of grain selector (that helical structure at the bottom, kind of a filter which allows only 1 columnar microstructure to propagate longitudinally across the blade length).

As solidification proceeds, two to six grains enter the helix, (or grain selector) - some grains are physically blocked from entering the helix and the one or few that survive, have their horizontal dendrites most favorably positioned to enter the helix. After one or two turns of the helix only one crystal survives - this one grain emerges from the top of the selector, and this grain fills the entire mold cavity.

The helix wire diameter typically varies from 0.3 to 0.5 cm (0.1 to 0.2 inch).

The single crystal production process uses the same vacuum furnaces as for columnar-grain castings (aka DS castings), but the temperature gradients in the furnaces have been increased significantly, from about 36 to 72 deg C/cm.

Just to underline the importance of various blade metallurgy technologies, a 25 deg C improvement in metal temperature capability corresponds to a three-fold improvement in blade-life.
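To get a feel for what that rule of thumb implies, here is a tiny sketch. It assumes the three-fold gain compounds geometrically for every further 25 deg C step, which is an extrapolation on my part and not something the rule itself guarantees. The function name and parameters are hypothetical.

```python
def blade_life_multiplier(delta_t_c, step_c=25.0, factor_per_step=3.0):
    """Life gain for a given rise in metal temperature capability.

    Assumes the 3x-per-25-deg-C rule compounds geometrically
    (an illustrative extrapolation, valid at best over a narrow range).
    """
    return factor_per_step ** (delta_t_c / step_c)


for delta in (25, 50, 75):
    print(delta, "deg C ->", round(blade_life_multiplier(delta), 1), "x life")
```

Read the other way round, the same relation says that running a given blade hotter than its capability eats into its life just as steeply, which is why every increment in metal temperature capability is fought for.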

The SC myth:

But the SCB all by itself is not the be-all and end-all for achieving high TeT - in fact the 1st-gen SCBs fall short of the last-gen DS blades (approx. 1450 deg C TeT) by about 100 deg C.